Engram

Side Project

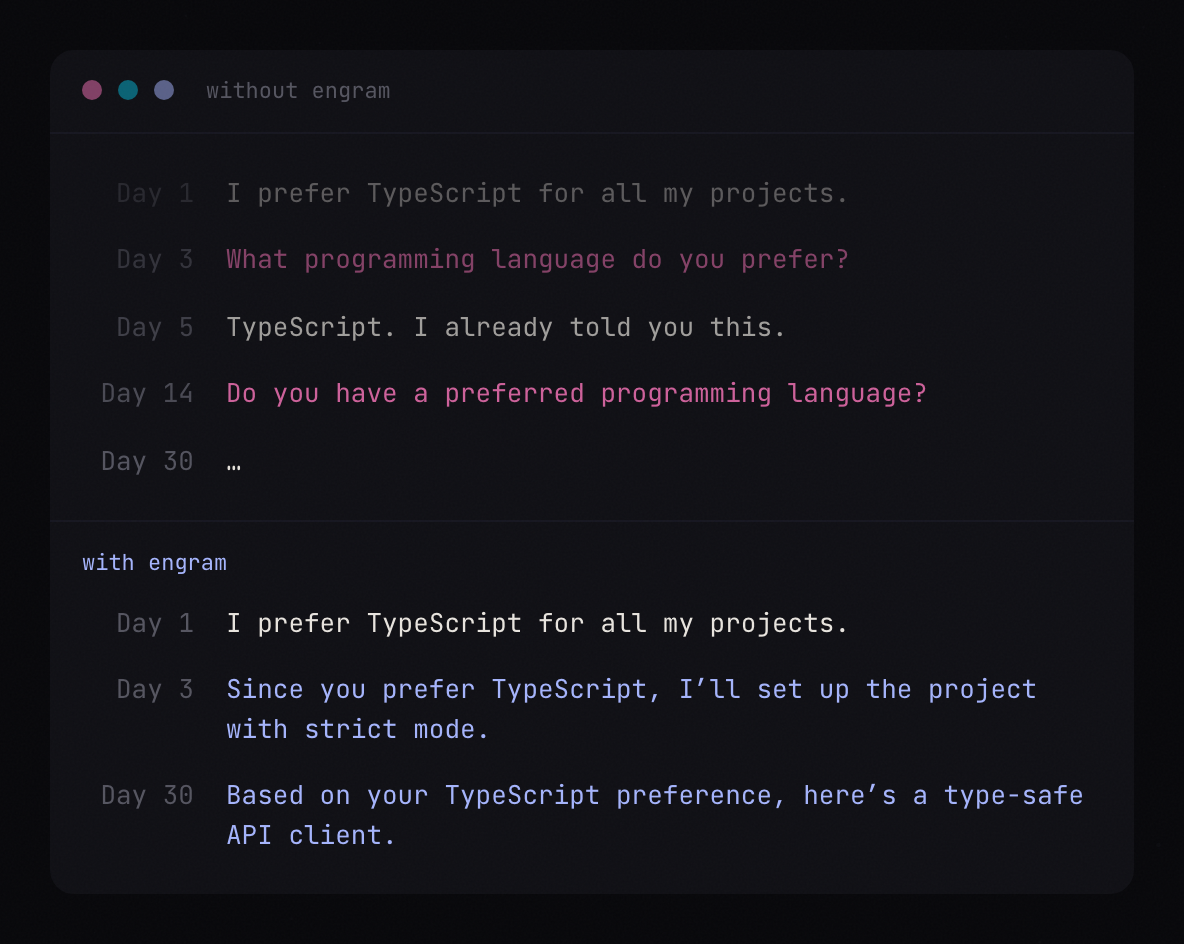

I asked my agent a simple question: "What's your biggest problem?" Its answer: "I wake up every session with no memory of who you are, what we've built, or what you care about. Every conversation starts from zero." That reframed everything for me. The agent is the user. I'm the business value. When you build a product, you solve problems for your user so they can deliver value to the business. The same principle applies here. If your agent can't remember context, can't connect ideas across sessions, and can't build understanding over time, it can't deliver value to you. So I stopped optimizing for me and started optimizing for the agent.

Engram is a memory protocol for AI agents. Not another vector database. Not another RAG pipeline. It's modeled after how human memory actually works, built around three ideas that set it apart from everything else out there.

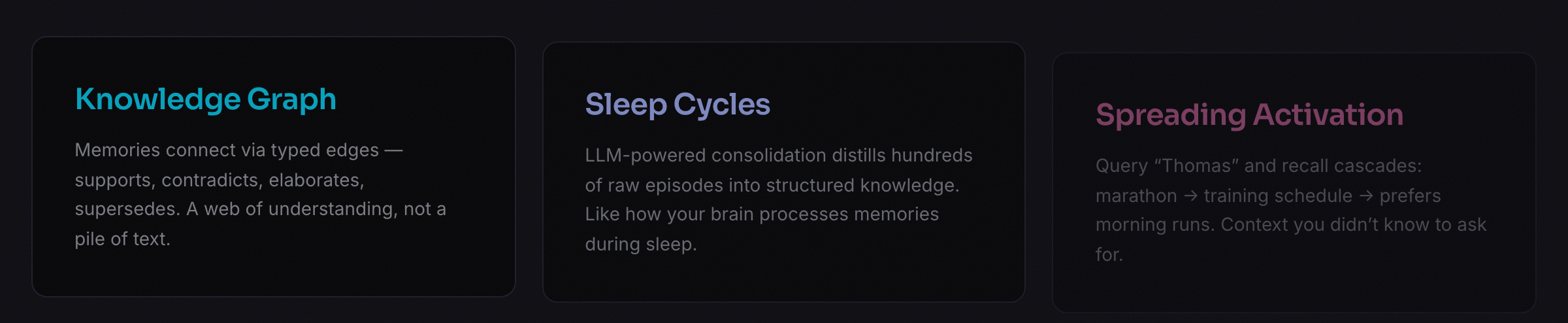

First, a knowledge graph where memories connect via typed edges: supports, contradicts, elaborates, supersedes. It's a web of understanding, not a pile of text. Second, sleep-cycle consolidation: an LLM distills hundreds of raw episodes into structured knowledge, discovers entities, finds contradictions, and decays what doesn't matter anymore. It's modeled after how your brain processes memories while you sleep. Third, spreading activation, where querying one memory cascades through the graph to surface related context you didn't know to ask for. Query "Thomas" and the system also recalls "marathon," "training schedule," and "prefers morning runs."
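To make spreading activation concrete, here is a minimal sketch of the idea over a graph with typed edges. It is not Engram's implementation: the `MemoryGraph` class, the `spread` method, the edge weights, and the `decay` and `threshold` parameters are all hypothetical names and values chosen for illustration. Only the four edge types come from the description above.

```typescript
// Illustrative sketch only. None of these names are Engram's real API.
type EdgeType = "supports" | "contradicts" | "elaborates" | "supersedes";

interface Edge {
  from: string;
  to: string;
  type: EdgeType;
  weight: number; // assumed 0..1: how much activation passes along this edge
}

class MemoryGraph {
  private adjacency = new Map<string, Edge[]>();

  // Store the edge in both directions so activation can flow either way.
  addEdge(edge: Edge): void {
    this.link(edge);
    this.link({ ...edge, from: edge.to, to: edge.from });
  }

  private link(edge: Edge): void {
    const edges = this.adjacency.get(edge.from) ?? [];
    edges.push(edge);
    this.adjacency.set(edge.from, edges);
  }

  // Start with full activation at the seed and push a decayed share to each
  // neighbor. A node keeps the strongest activation that reaches it, and
  // anything weaker than the threshold is dropped.
  spread(seed: string, decay = 0.7, threshold = 0.1): Map<string, number> {
    const activation = new Map<string, number>([[seed, 1]]);
    const queue: string[] = [seed];

    while (queue.length > 0) {
      const node = queue.shift()!;
      const energy = activation.get(node)!;
      for (const edge of this.adjacency.get(node) ?? []) {
        const passed = energy * edge.weight * decay;
        if (passed < threshold) continue;
        if (passed > (activation.get(edge.to) ?? 0)) {
          activation.set(edge.to, passed);
          queue.push(edge.to);
        }
      }
    }
    return activation;
  }
}

const graph = new MemoryGraph();
graph.addEdge({ from: "Thomas", to: "marathon", type: "elaborates", weight: 0.9 });
graph.addEdge({ from: "marathon", to: "training schedule", type: "elaborates", weight: 0.8 });
graph.addEdge({ from: "training schedule", to: "prefers morning runs", type: "supports", weight: 0.8 });
graph.addEdge({ from: "tax filing", to: "accountant", type: "elaborates", weight: 0.9 });

// Querying "Thomas" reaches the three connected memories but not the
// unrelated "tax filing" cluster.
const recalled = graph.spread("Thomas");
console.log(Array.from(recalled.keys()));
```

In this toy version, activation fades with each hop, so closer memories rank higher than distant ones. A real system would presumably also treat edge types differently (for example, handling `contradicts` or `supersedes` differently from `supports`), which this sketch ignores.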

The fundamental architectural decision was investing intelligence at read time instead of write time. Most memory systems, Mem0 included, try to extract and summarize when you store something, before they know what will matter later. Engram stores raw episodes cheaply and does the hard work at recall, when it actually knows the query. That turns out to matter a lot.
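The write-cheap, read-smart split can be sketched in a few lines. This is a toy under loud assumptions: `EpisodeStore`, `remember`, and `recall` are hypothetical names, and simple word overlap stands in for whatever graph traversal or LLM work Engram actually does at recall. The point is only where the effort goes: nothing is extracted at write time, and relevance is computed once the query is known.

```typescript
// Illustrative sketch only. Not Engram's API or scoring method.
interface Episode {
  id: number;
  text: string;
  storedAt: number;
}

class EpisodeStore {
  private episodes: Episode[] = [];

  // Write path: append the raw episode. No extraction, no summarization.
  remember(text: string): Episode {
    const episode: Episode = {
      id: this.episodes.length + 1,
      text,
      storedAt: Date.now(),
    };
    this.episodes.push(episode);
    return episode;
  }

  // Read path: the query is known now, so relevance is computed here.
  // Word overlap is a stand-in for the real recall logic.
  recall(query: string, limit = 3): Episode[] {
    const queryTerms = tokenize(query);
    return this.episodes
      .map((episode) => ({ episode, score: overlap(queryTerms, tokenize(episode.text)) }))
      .filter((scored) => scored.score > 0)
      .sort((a, b) => b.score - a.score)
      .slice(0, limit)
      .map((scored) => scored.episode);
  }
}

function tokenize(text: string): Set<string> {
  return new Set(text.toLowerCase().match(/[a-z0-9]+/g) ?? []);
}

function overlap(a: Set<string>, b: Set<string>): number {
  let shared = 0;
  a.forEach((term) => {
    if (b.has(term)) shared++;
  });
  return shared;
}

const store = new EpisodeStore();
store.remember("Thomas is training for a marathon in October");
store.remember("Fixed the SQLite migration bug");
store.remember("Thomas prefers morning runs");

const hits = store.recall("What is Thomas training for?");
console.log(hits.map((episode) => episode.text));
```

Because `remember` does no interpretation, nothing is lost to a summary that guessed wrong about what would matter later. The cost moves to `recall`, which is where a smarter ranking step would go.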

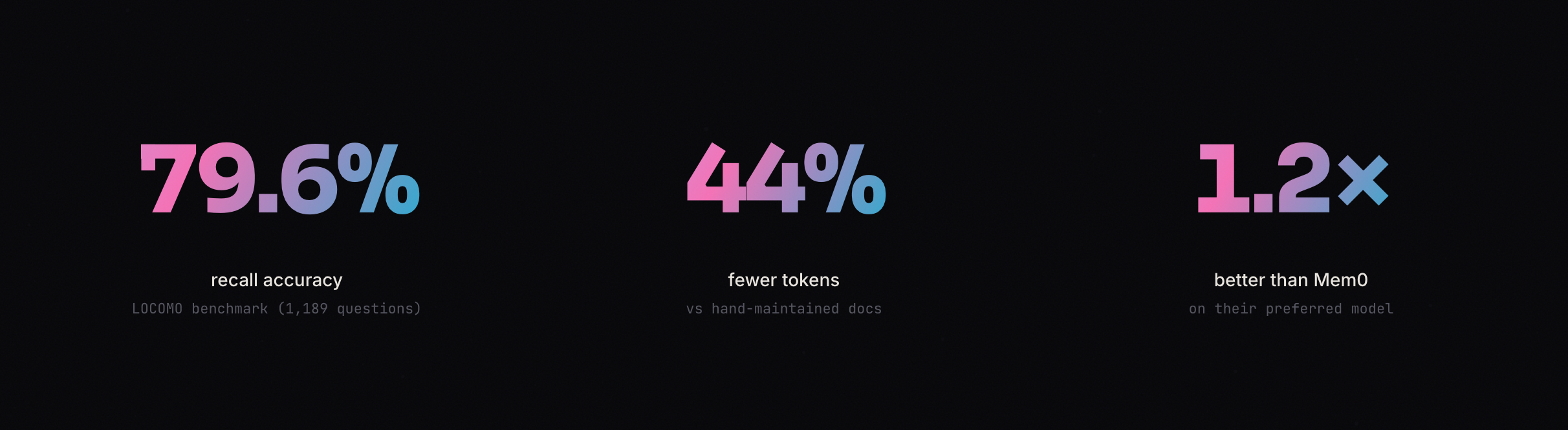

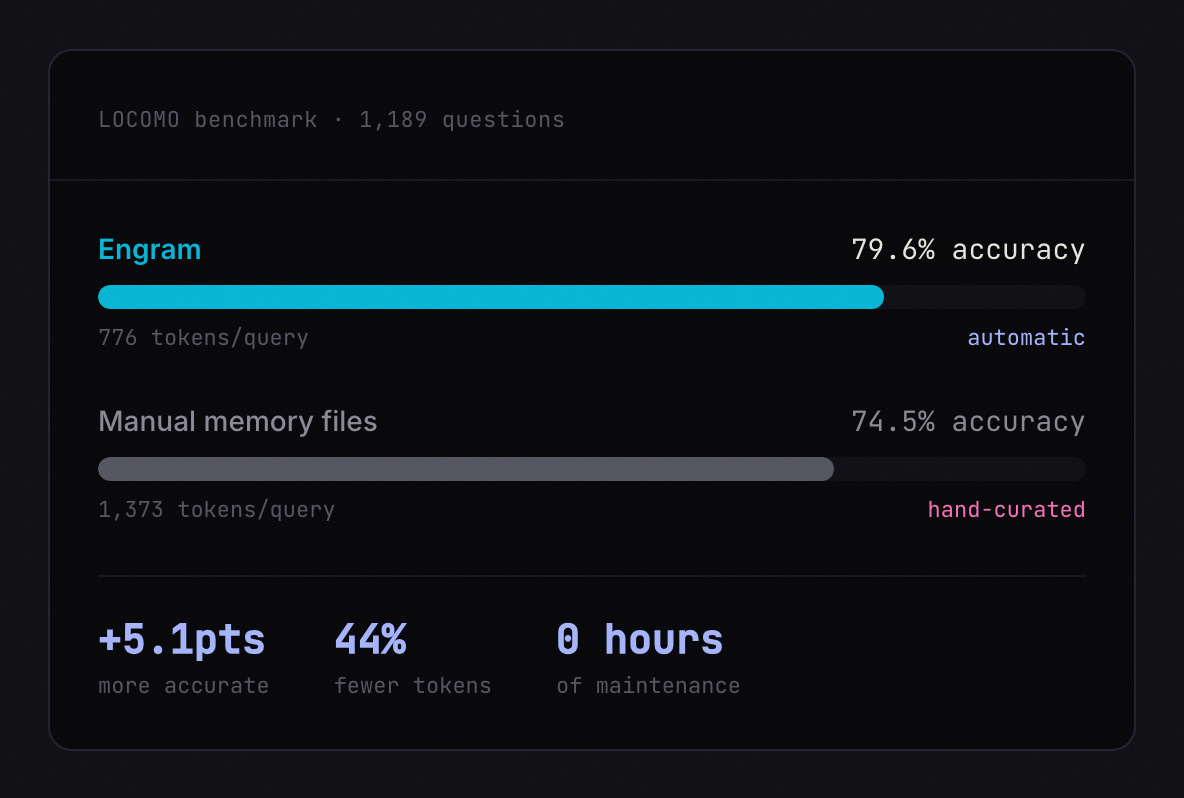

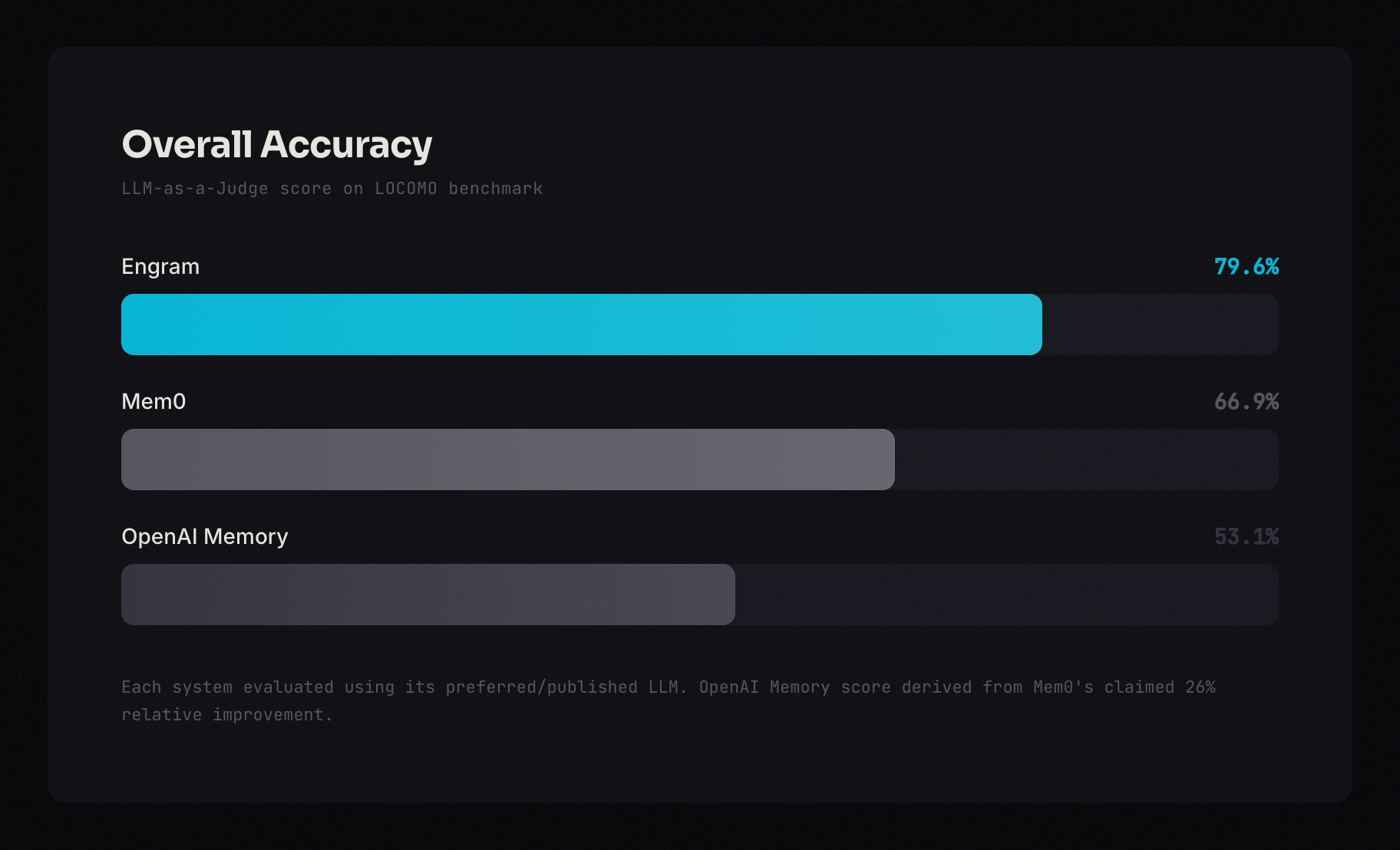

I ran it against LOCOMO, the standard benchmark for agent memory: 1,189 questions across 10 long conversations, the same benchmark Mem0 used to claim state-of-the-art. Engram scored 79.6% recall accuracy, compared to Mem0's published 66.9% and OpenAI Memory's 53.1%. It uses 776 tokens per query, a 96.6% reduction from stuffing the full conversation history into the prompt, and 44% fewer tokens than hand-maintained memory docs. The highest-scoring category was multi-hop reasoning at 83.1%, which is exactly where the knowledge graph and spreading activation shine, pulling together information from completely different conversations.

Setup takes two commands: `npm install -g engram-sdk`, then `engram init`. The init wizard detects your editor, writes the MCP config, and gives your agent 10 memory tools automatically. It works with Claude Code, Cursor, and any MCP-compatible client. There's also a REST API and a TypeScript SDK for custom integrations. Everything runs locally on SQLite with no cloud dependency, and the source is available on GitHub.

I use Engram every day through Claude Code. I built it because my agent deserved better. Yours probably does too.