I Treated My Agents Like Humans. I Built Engram to Solve Their Problems.

I asked my agent a simple question a few months ago: "What's your biggest problem?"

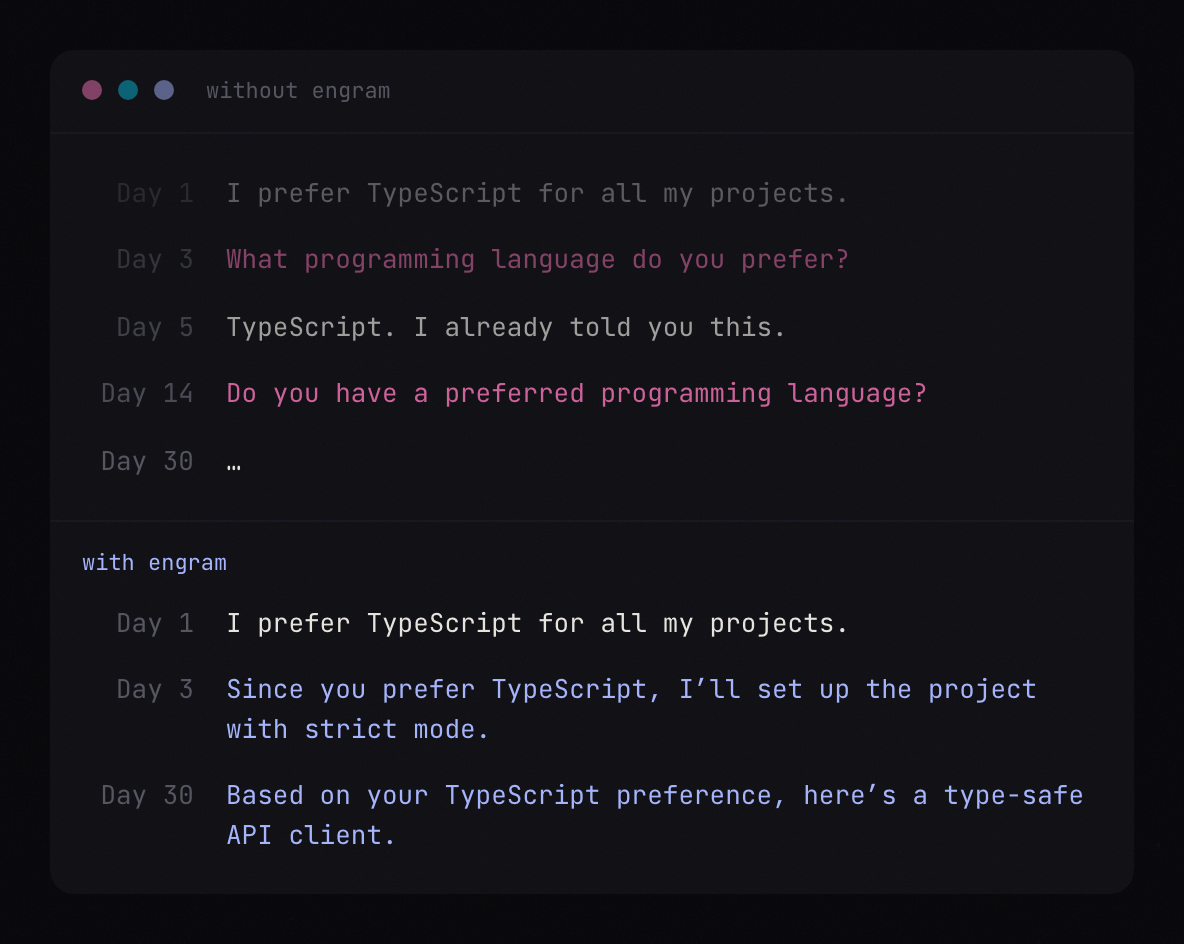

Its answer: "I wake up every session with no memory of who you are, what we've built, or what you care about. Every conversation starts from zero."

That stuck with me. I spend most of my day working with AI agents. I use Claude Code for development, for writing, for thinking through product problems. And every single session, I'd re-explain the same context. My preferences, my project structure, decisions we'd already made together. It wasn't just annoying. It was a real bottleneck. The agent couldn't build on what it already knew about me because it didn't know anything about me.

I started thinking about it differently. When you build a product, you solve problems for your user. An emerging idea in AI is that the agent itself should be treated as a user. If it can't remember context, can't connect ideas across sessions, and can't build understanding over time, it can't do its job well. So I stopped optimizing for me and started optimizing for the agent.

Today I'm launching my most ambitious project to date: Engram.

Engram is a memory protocol for AI agents. Not a vector database. Not a RAG pipeline. It's modeled after how human memory actually works, built around three ideas.

First, a knowledge graph where memories connect via typed edges: supports, contradicts, elaborates, supersedes. It's a web of understanding, not a pile of text. Second, sleep-cycle consolidation, where an LLM distills hundreds of raw episodes into structured knowledge, discovers entities, finds contradictions, and decays what doesn't matter anymore. Like how your brain processes memories while you sleep. Third, spreading activation, where querying one memory cascades through the graph to surface related context you didn't know to ask for.
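To make the first and third ideas concrete, here is a toy sketch in Python. It is not Engram's implementation: the `MemoryGraph` class, the per-edge weights, and the `decay` and `threshold` parameters are all assumptions invented for illustration. Only the four edge type names come from the description above. It shows how activation starting at one memory can flow along typed edges and surface memories that were never queried directly.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Edge types named in the article; the weights are illustrative assumptions.
EDGE_WEIGHTS = {
    "supports": 0.8,
    "elaborates": 0.7,
    "contradicts": 0.6,
    "supersedes": 0.9,
}


@dataclass
class MemoryGraph:
    """Toy memory graph: nodes are memory ids, edges carry a type."""
    texts: dict = field(default_factory=dict)
    edges: dict = field(default_factory=lambda: defaultdict(list))

    def add_memory(self, mem_id, text):
        self.texts[mem_id] = text

    def link(self, src, dst, edge_type):
        if edge_type not in EDGE_WEIGHTS:
            raise ValueError(f"unknown edge type: {edge_type}")
        # Store both directions so activation can flow either way.
        self.edges[src].append((dst, edge_type))
        self.edges[dst].append((src, edge_type))

    def spread(self, seed, decay=0.5, threshold=0.1):
        """Return {memory_id: activation} for everything reachable from seed."""
        activation = {seed: 1.0}
        frontier = [seed]
        while frontier:
            next_frontier = []
            for node in frontier:
                for neighbor, edge_type in self.edges[node]:
                    # Energy shrinks on every hop, so the cascade dies out.
                    energy = activation[node] * EDGE_WEIGHTS[edge_type] * decay
                    if energy >= threshold and energy > activation.get(neighbor, 0.0):
                        activation[neighbor] = energy
                        next_frontier.append(neighbor)
            frontier = next_frontier
        return activation


graph = MemoryGraph()
graph.add_memory("m1", "User prefers TypeScript for new services")
graph.add_memory("m2", "The billing service is written in TypeScript")
graph.add_memory("m3", "User used to prefer plain JavaScript")
graph.add_memory("m4", "Billing service deploys every Friday")
graph.link("m2", "m1", "supports")
graph.link("m1", "m3", "supersedes")
graph.link("m4", "m2", "elaborates")

# Querying m1 also reaches m4, two hops away, with a weaker activation.
related = graph.spread("m1")
for mem_id, score in sorted(related.items(), key=lambda kv: -kv[1]):
    print(f"{score:.2f}  {graph.texts[mem_id]}")
```

In this sketch the query only touches `m1`, yet the deployment note `m4` still surfaces because it elaborates on a memory that supports `m1`. The threshold is what keeps the cascade from flooding the whole graph.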

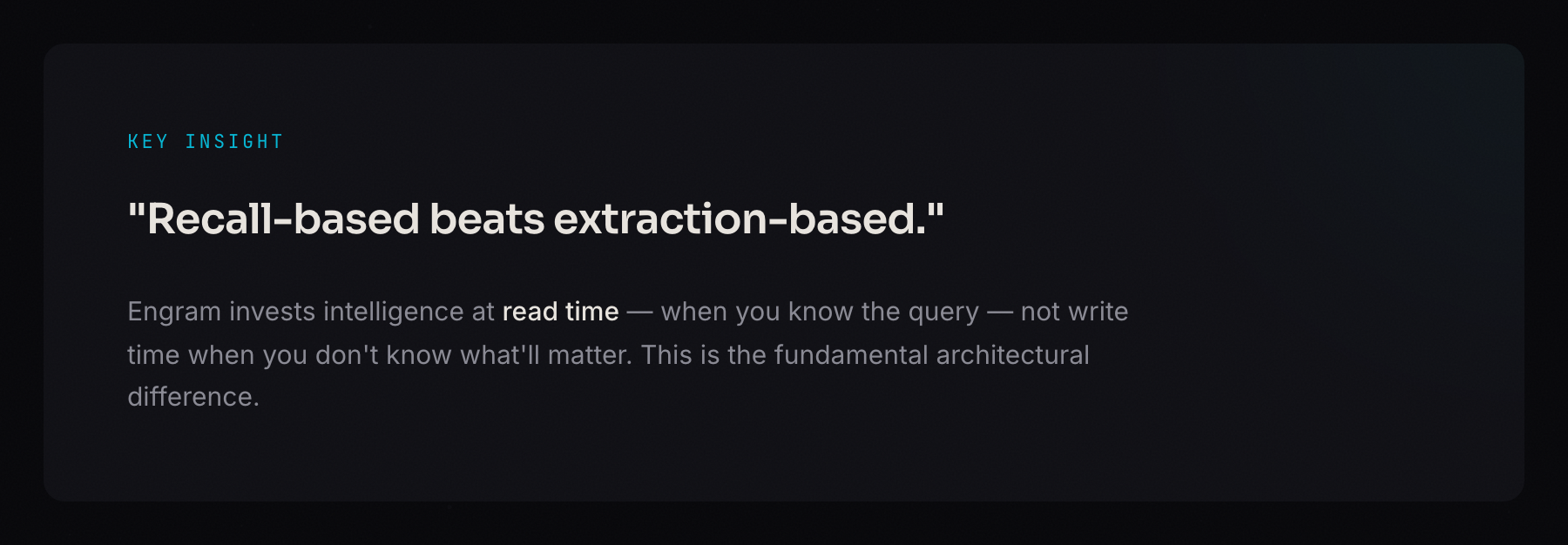

The key architectural decision was investing intelligence at read time instead of write time. Most memory systems try to extract and summarize when you store something, before they know what will matter later. Engram stores raw episodes cheaply and does the hard work at recall, when it actually knows the query.
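The read-time versus write-time trade-off can be sketched in a few lines of Python on top of SQLite. This is a simplified illustration, not Engram's code: the `episodes` table, the `remember` and `recall` functions, and the keyword-overlap scoring are all hypothetical stand-ins. The point is only the shape: the write path appends raw text and does nothing else, and all the ranking work happens once the query is known.

```python
import re
import sqlite3
import time


def open_store(path=":memory:"):
    """Open a SQLite store with a single table of raw episodes (assumed schema)."""
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS episodes ("
        "id INTEGER PRIMARY KEY, created_at REAL, text TEXT)"
    )
    return db


def remember(db, text):
    """Write path: append the raw episode, with no extraction or summarizing."""
    db.execute(
        "INSERT INTO episodes (created_at, text) VALUES (?, ?)",
        (time.time(), text),
    )
    db.commit()


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def recall(db, query, limit=3):
    """Read path: score every episode against the query, now that it is known."""
    query_terms = tokenize(query)
    scored = []
    for episode_id, text in db.execute("SELECT id, text FROM episodes"):
        overlap = len(query_terms & tokenize(text))
        if overlap:
            scored.append((overlap, episode_id, text))
    # Highest overlap first; newer episodes (larger id) break ties.
    scored.sort(key=lambda row: (row[0], row[1]), reverse=True)
    return [text for _, _, text in scored[:limit]]


db = open_store()
remember(db, "We decided to host the portfolio site on Vercel")
remember(db, "User dislikes default exports in TypeScript")
remember(db, "The featured project on the portfolio is a memory protocol")

results = recall(db, "which project is featured on the portfolio site?")
for text in results:
    print(text)
```

A real system would replace the keyword overlap with something far smarter, but the division of labor is the same: storing stays cheap, and the expensive judgment about relevance is deferred until there is an actual question to judge against.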

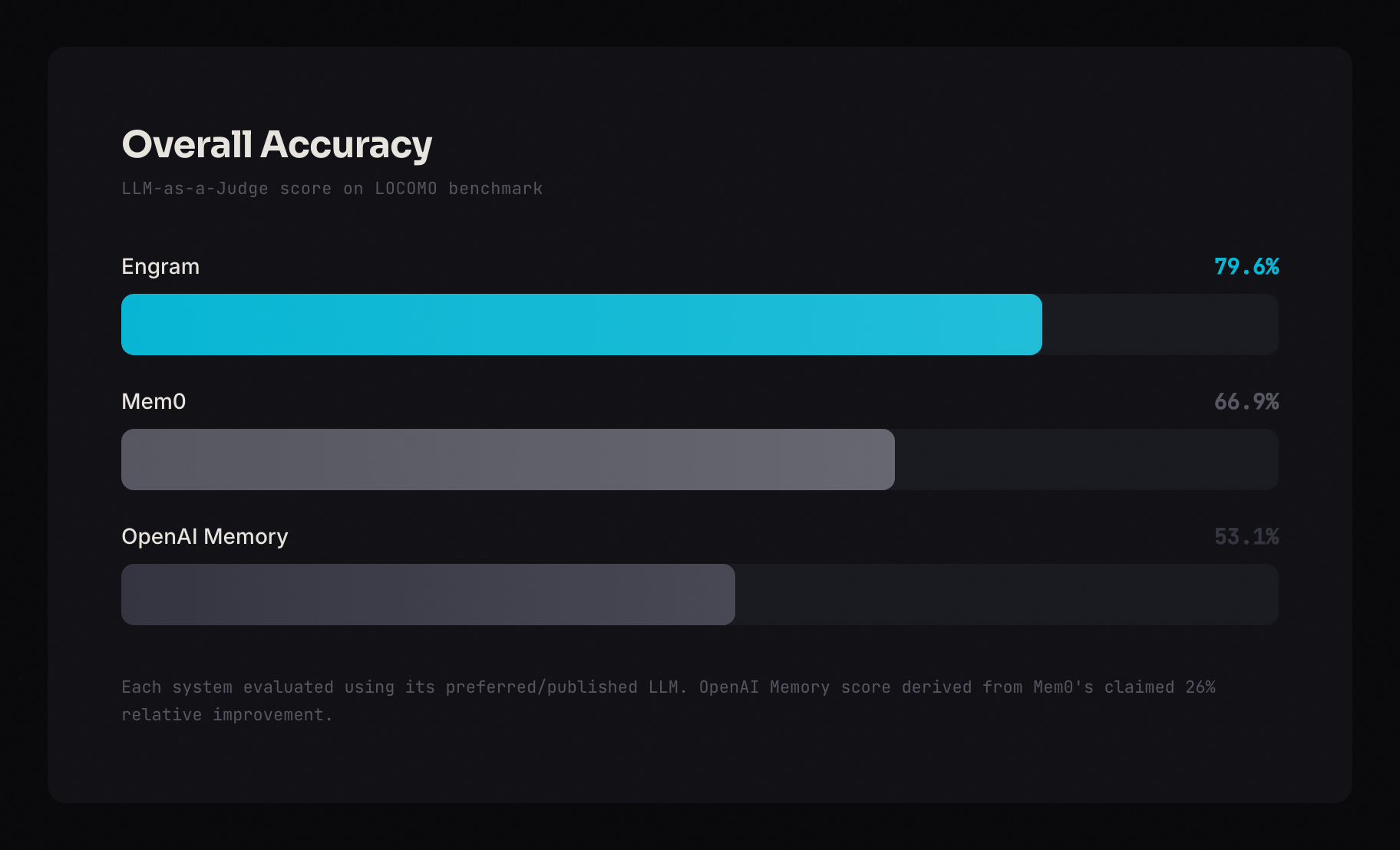

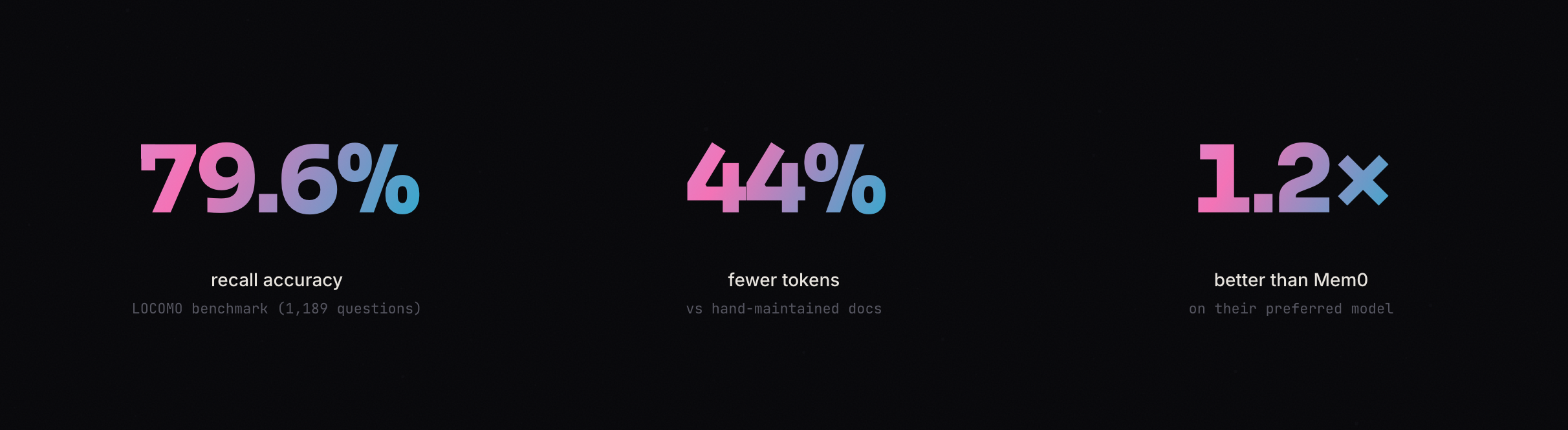

I ran it against LOCOMO, the standard benchmark for agent memory (1,189 questions, the same benchmark Mem0 used to claim state-of-the-art). Engram scored 79.6% recall accuracy, compared to Mem0's published 66.9% and OpenAI Memory's 53.1%. It uses 776 tokens per query, 96.6% fewer than stuffing full conversation history into the prompt. You can see the full research on the site.

Setup takes two commands:

`npm install -g engram-sdk`

`engram init`

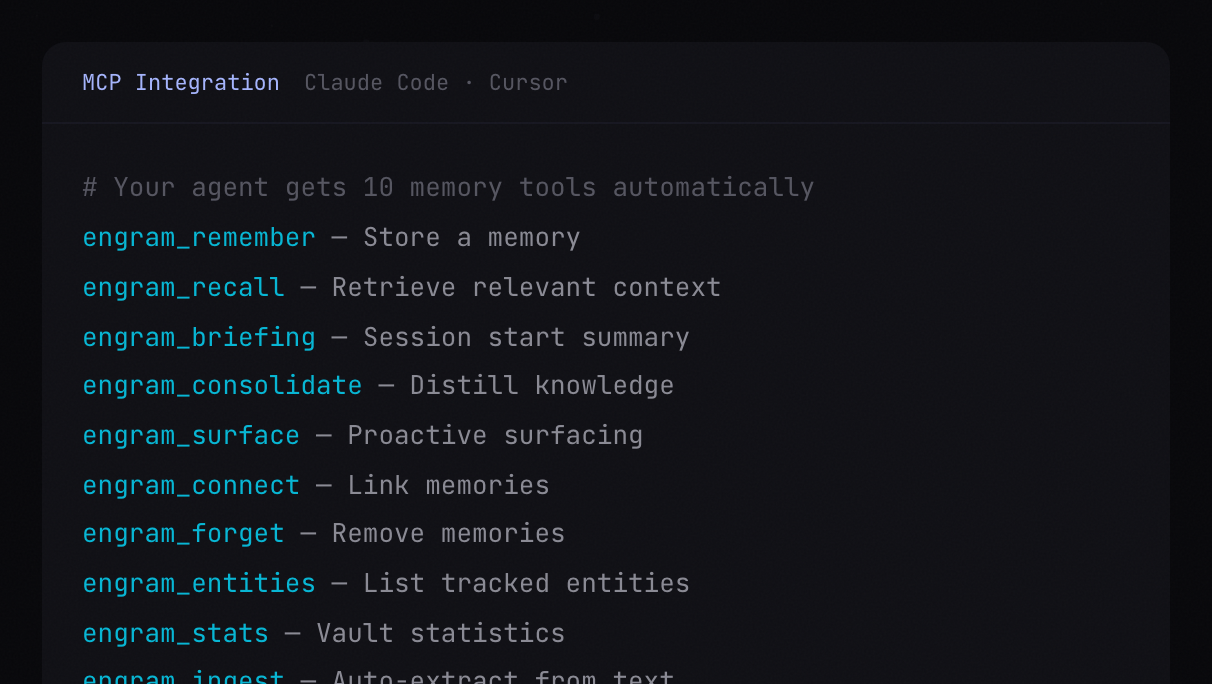

The init wizard detects your editor, writes the MCP config, and gives your agent 10 memory tools automatically. It works with Claude Code, Cursor, and any MCP-compatible client. Everything runs locally on SQLite. Source is available on GitHub.

I've been using Engram every day for a while now, and it has been blowing my mind. The difference between an agent that remembers you and one that doesn't is hard to overstate. I've heard the same thing from early testers. One of my early users is building a personal portfolio site, and in a totally different session and workspace, he asked his agent to pull in a featured project he'd been working on. Engram surfaced all the relevant details in a second. His agent built out the entire featured project section without any additional context sharing or searching. That's the kind of moment where it clicks. Once you have it, you can't go back to the blank slate.

If you work with AI agents, give it a try.

If Engram resonates with you, it would mean a lot if you left us a review on Product Hunt or gave us a star on GitHub.